Instant messaging apps with end-to-end encryption love to brag about how they protect their users’ privacy, even in the face of mandatory decryption laws. Signal’s homepage claims:

we can’t read your messages or listen to your calls, and no one else can either.

But if you’re one of the many users installing Signal in a typical fashion (via the App Store on an iPhone), it can certainly be modified to send your messages to someone else, and you will be none the wiser. This kind of modification is referred to as a “backdoor”.

This post focuses on backdoors that software developers can be compelled to create under the Australian Assistance and Access Act. The Act allows various state agencies in Australia to compel industry to help safeguard national security and the interests of Australia, and to enforce Australian and foreign criminal law. An obvious and intended application is to gain access to the communications of people under state surveillance. The legislation was passed in 2018, so it’s not new, but its implications for privacy are still relevant today.

Signal devs on backdoors

I’m going to pick on Signal a lot in this post, because I’m familiar with it. A lot of the problems I describe would apply equally to Telegram, WhatsApp, and other messaging apps with end-to-end encryption. While I’ll describe ways the developers could do better, know that the real problem here is legislation like the Assistance and Access Act.

Back in 2018, Joshua Lund (jlund@) of Signal bragged in a blog post reacting to the Act, “we can’t include a backdoor in Signal”. He went on to say:

Reproducible builds and other readily accessible binary comparisons make it possible to ensure the code we distribute is what is actually running on user’s devices. People often use Signal to share secrets with their friends, but we can’t hide secrets in our software.

However, if you actually dig into the details of Signal’s issue for reproducible iOS builds and the thread the discussion was moved to, it’s apparent that verifying a Signal binary installed via the App Store is actually quite complex. Apple modifies the binaries it serves via the App Store, so verification involves backing up the iPhone with iTunes to get the .ipa file, unpacking it, decrypting the binary using a jailbroken device, and comparing that to a known-good binary. Theoretically the Signal devs (or someone else with a jailbroken phone) could publish a list of known-good hashes of the Apple-modified .ipa files, but they don’t. They don’t even make the binaries they upload to the App Store easily available: try finding them from the Signal download page or the GitHub releases page.
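
Once a decrypted binary is in hand, the final comparison step is conceptually simple. Here is a minimal sketch, assuming a hypothetical published list of known-good SHA-256 digests (which, as noted above, nobody currently publishes). The function names and the `known_good` format are illustrative, not anything Signal or Apple provides:

```python
import hashlib
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_known_good(path: Path, version: str, known_good: dict[str, set[str]]) -> bool:
    """Check a binary's digest against the published digests for its version.

    `known_good` maps a version string to a set of acceptable digests.
    This format is hypothetical; a set is used in case more than one
    legitimate build variant exists for a given version.
    """
    return sha256_of_file(path) in known_good.get(version, set())
```

The hard part is not this check but everything before it: obtaining the decrypted post-Apple binary, and having a trustworthy source for the digests.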

Most users are taking the claims on Signal’s home page that “we can’t read your messages or listen to your calls, and no one else can either” at face value, without building from source or going through extra steps to verify binaries. These claims are wrong: there is a real risk of backdoored binaries when trusting the App Store.

How Australian police can read all your messages

- The police branch the Signal app, adding a backdoor that sends the user’s message history to the police. They bump the minor version.

- The police issue a “technical assistance notice” under the Assistance and Access Act to Apple, demanding that the App Store serve the backdoored Signal binary to (just) your device or account next time the Signal devs upload an official version.

- The Signal devs release a new official version and upload it to the App Store. Existing Signal clients nag users to upgrade. You do so, by opening the App Store and upgrading Signal.

- The police now have your message history.

There are variations to this:

- the police could request that the Signal devs upload the backdoored version to the App Store for everyone (while simultaneously uploading an innocent build to GitHub). Access to messages could be gated on a triggering mechanism that only the police would have access to (for example, a cryptographically-signed message from a state-controlled account). These code changes could be detected via the jailbroken-device method, but it doesn’t seem like anyone is routinely monitoring this.

- the police could require Apple to make even broader changes, e.g. to side-load the backdoor such that it’s not present in the .ipa downloaded by iTunes, making verification completely impossible.

What about the limitations in the Act?

The Assistance and Access Act includes limitations against compelling providers to include “systemic weaknesses” and “systemic vulnerabilities” in section 317ZG. The Australian government points to these as prohibiting backdoors from being introduced under the Act.

The language the Act actually uses centres around “systemic” (not okay) versus targeted (okay). From section 317B:

“systemic weakness” means a weakness that affects a whole class of technology, but does not include a weakness that is selectively introduced to one or more target technologies that are connected with a particular person

Section 317ZG also says:

A technical assistance request, technical assistance notice or technical capability notice must not… jeopardise the security of any information held by any other person.

The government could argue that the backdoor approaches I described above are targeted to particular individuals or devices, and not systemic. The Attorney-General makes the ultimate decision on whether to proceed with a Technical Assistance Notice or a Technical Capability Notice, merely “having regard” to many of the provisions in section 317ZG. Australia’s law-enforcement agencies have a record of heavy-handedness and incompetence, with no regrets expressed by former Attorneys-General.

Aggressive updates make it worse

Signal’s practice of aggressively pushing updates makes it easier for a backdoored binary to get installed. It normalizes updating frequently. Every update is an opportunity for a backdoored binary to be installed. Frequent upgrades also encourage lazy behaviour: installing from the App Store, rather than building from source or attempting binary verification.

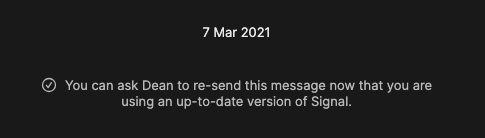

Signal is pushing these updates in two ways; one is a “newer version is available” nag, the other is compatibility-breaking changes:

There’s a balance to maintain here: it’s good for users to pick up fixes to privacy vulnerabilities. But those are rare and should be clearly flagged as critical updates.
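
One way to picture that policy is a sketch that assumes each release carries a hypothetical `privacy_critical` flag in its metadata (I’m not claiming Signal’s release metadata has anything like this). The client would nag only if some release newer than the installed one is flagged:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Release:
    version: tuple[int, int, int]  # e.g. (7, 2, 0)
    privacy_critical: bool         # hypothetical flag set by the developers


def should_nag(installed: tuple[int, int, int], releases: list[Release]) -> bool:
    """Nag only when a release newer than `installed` is privacy-critical."""
    return any(r.privacy_critical and r.version > installed for r in releases)
```

Under this scheme, routine feature releases would never trigger a prompt on their own, so each prompt a user does see carries real information.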

What Signal developers should do

The Signal devs could increase the trust in the iOS binaries installed via the App Store, and increase awareness of the risks of backdoored binaries through a few actions:

- Actually create a reproducible iOS build process for the pre-Apple binary they upload, like they do for Android

- Verify the post-Apple binary that the App Store serves (using a jailbroken device, and an open source, reproducible process), and publish the list of known-good hashes

- Stop nagging users to update unless it’s for privacy-critical changes

- Stop claiming that end-to-end encryption is a complete solution to privacy